Your agent has a skill issue

Plus world models, SKILL.md, and the real MCP vs CLI trade-offs

Markets priced in our coding agents conference. $2B ARR followed.

We’ll share the talks soon so you can see what they knew.

HOT TAKE

Scheduled Lag

Open source is now on a 2-3 month lag behind frontier closed models. That’s not a gap, it’s a release schedule.

For production systems: open source or closed API - what do you ship?

LAST WEEK’S TAKE

Burned Into Memory

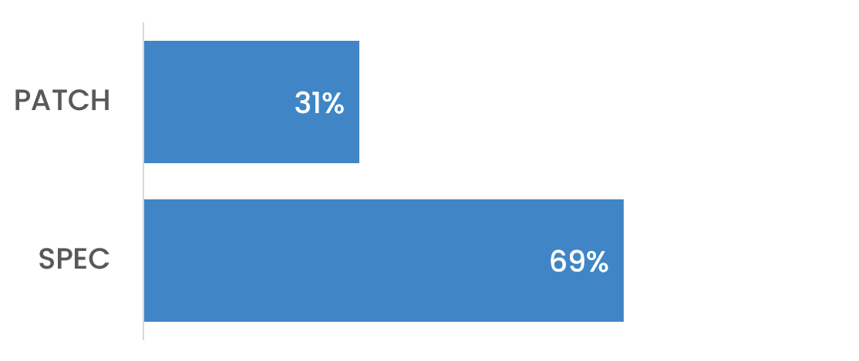

Most of you picked spec change over live patching in prod - the cost of “just this once” has clearly left a mark.

PRESENTED BY DATABRICKS

The DevConnect Global Roadshow is Hitting the Road! 🌍

Get ready to go way beyond the documentation. Databricks DevConnect is officially going global, bringing a high-energy roadshow straight to the world’s top tech hubs.

This is the ultimate destination for data engineering and AI innovation. No high-level fluff, no sales pitches—just raw technical deep dives, architectural breakdowns, and real-world tactical advice from the people actually building the future.

Why DevConnect?

Dive Deep: Tear down complex architectures and master the latest Lakehouse features.

Expand Your Circle: Connect and collaborate with a global community of developers who face the same challenges you do.

Level Up Your Career: Learn the workflows and hacks that make you a faster, sharper, and more effective developer.

Global Tour Dates

The roadshow is moving fast. Secure a spot in your city:

March 12 - San Francisco, CA

March 31 - Bellevue, WA

April 1 - Vancouver, CA

April 14 - Austin, TX

April 16 - Denver, CO

April 28 - Munich, Germany

April 29 - London, UK

HIDDEN GEMS

Curated finds to help you stay ahead

Reducing ML monitoring time by replacing a dense mega-dashboard with tiered alerting and a simplified health-vs-error visual.

Evaluating AGENTS.md context files for coding agents, finding that auto-generated files hurt performance while human-written ones help - but only when they add information the repo doesn’t already contain.

OWASP’s Top 10 security risks for autonomous, tool-using AI agents, offering focused guidance on threats like goal hijack, tool misuse, identity abuse, and supply chain vulnerabilities to help secure deployments.

RL-trained agent generating optimized CUDA kernels, outperforming torch.compile and proprietary models on KernelBench through multi-turn execution feedback.

💡Job of the week

CTO (Founding) // Tingz (Flexible)

Tingz is an early-stage, revenue-generating startup building an AI-driven system that converts natural language prompts into deployable browser games using modular Unity and Godot templates. The founding CTO will set technical direction, design the AI-to-game pipeline, and contribute hands-on to architecture and code.

Responsibilities

Architect prompt-to-game pipeline across templates and plugins

Integrate third-party AI toolkits and external APIs

Define system architecture for compilation and deployment

Review PRs and guide CI/CD implementation

Requirements

Experience leading architecture as CTO or technical co-founder

Production experience with LLMs, agents, and generative models

Designed modular or plugin-based software systems

Comfortable working with Unity C# outputs and pipelines

AGENT INFRASTRUCTURE

MCP vs CLI: the three production questions underneath the argument

Infrastructure engineer Eric Holmes published a post last week arguing MCP is already dying and that most agent workflows are simpler with a terminal toolchain. The core case is practical: models already understand CLI patterns from training data, CLIs behave identically whether a human or an agent is driving them, and the shell is composable in ways MCP tool calls aren’t - pipes, redirects, sampling large datasets with jq or duckdb rather than loading raw payloads into context. Hacker News ran with it, with people describing a probe-first pattern: filter and aggregate at the shell, keep the context window for reasoning.
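To make the probe-first pattern concrete, here is a minimal Python sketch of the same idea that `jq` or `duckdb` would serve at the shell: aggregate the payload first and hand the model only a digest. The `probe` helper and the synthetic `records` payload are assumptions for illustration, not anything from the post or the thread.

```python
import json
from collections import Counter


def probe(records, group_key, sample_size=3):
    """Summarize a large payload so only a compact digest reaches the model."""
    counts = Counter(r.get(group_key, "<missing>") for r in records)
    return {
        "total": len(records),
        "fields": sorted({k for r in records for k in r}),
        "counts_by_" + group_key: dict(counts.most_common()),
        "sample": records[:sample_size],
    }


# Hypothetical payload standing in for a large API response.
records = [{"id": i, "status": "error" if i % 10 == 0 else "ok"} for i in range(1000)]

digest = probe(records, "status")
raw_chars = len(json.dumps(records))
digest_chars = len(json.dumps(digest))
print(f"raw payload: {raw_chars} chars, digest: {digest_chars} chars")
```

The digest keeps the shape of the data (fields, counts, a few sample rows) while the raw rows stay out of context until the agent asks a narrower question.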

MCP isn’t going anywhere, though. It’s now under the Linux Foundation, co-governed by Anthropic, OpenAI, and Block - specifically to keep tool integrations neutral and production-ready as agentic systems scale. The more productive read is that the argument is a proxy for three concrete problems teams are actually running into.

Context management. MCP servers often ship as large tool catalogs with verbose descriptions - expensive in tokens and slow in practice. The ecosystem is responding: Claude Code now has MCP Tool Search to defer loading tool definitions until they’re needed. David Cramer (Sentry co-founder, one of the more rigorous public voices on this) argues the real distinction is context quality vs context volume - tool descriptions that steer LLM behavior well rather than just listing parameters. That framing shifts the question from protocol choice to how you design what the model sees.
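As a rough illustration of deferred loading, the sketch below keeps a one-line index in context and returns full definitions only for tools that match a query. This is an assumed toy design, not how Claude Code's MCP Tool Search is implemented; `Tool`, `DeferredCatalog`, and the tool names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    summary: str      # one line, cheap to keep in context
    full_schema: str  # verbose definition, loaded only on demand


class DeferredCatalog:
    def __init__(self, tools):
        self._tools = {t.name: t for t in tools}

    def index(self):
        """Compact listing that is placed in context up front."""
        return [f"{t.name}: {t.summary}" for t in self._tools.values()]

    def search(self, query, limit=2):
        """Return full definitions only for tools matching the query terms."""
        terms = query.lower().split()
        scored = []
        for t in self._tools.values():
            text = f"{t.name} {t.summary}".lower()
            score = sum(term in text for term in terms)
            if score:
                scored.append((score, t.name))
        # Highest score first, ties broken alphabetically by name.
        scored.sort(key=lambda s: (-s[0], s[1]))
        return [self._tools[name] for _, name in scored[:limit]]


# Hypothetical tools for illustration only.
catalog = DeferredCatalog([
    Tool("issues_search", "search issues by query", "<long JSON schema>"),
    Tool("issues_create", "create a new issue", "<long JSON schema>"),
    Tool("deploy_status", "show deployment status", "<long JSON schema>"),
])
matches = [t.name for t in catalog.search("search issues")]
print(matches)
```

Note that the summaries do the steering here, which is Cramer's point: a well-written one-liner matters more than the size of the schema behind it.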

Auth and config. CLI ecosystems have mature credential handling: profiles, cached tokens, environment variable precedence, well-known file locations. MCP can duplicate all of that - especially for stdio servers - unless the server supports a clean remote OAuth flow and the client manages it properly. The teams with the least friction tend to be the ones who’ve either standardized on cloud CLIs with existing SSO, or built their own MCP servers rather than relying on vendor implementations.
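The precedence chain that mature CLIs give you for free looks roughly like the sketch below: explicit flag first, then environment variable, then a named profile. The `EXAMPLE_TOKEN` variable, the `resolve_token` function, and the profile layout are assumptions chosen for illustration and do not describe any specific CLI or MCP client.

```python
import os


def resolve_token(explicit=None, env=None, profiles=None, profile="default"):
    """Resolve a credential using flag > environment > profile precedence."""
    env = os.environ if env is None else env
    profiles = profiles or {}
    if explicit:
        return explicit, "flag"
    if env.get("EXAMPLE_TOKEN"):  # hypothetical variable name
        return env["EXAMPLE_TOKEN"], "env"
    token = profiles.get(profile, {}).get("token")
    if token:
        return token, "profile:" + profile
    raise LookupError("no credential found; run a login flow first")


# Hypothetical profile data, standing in for a parsed config file.
profiles = {"default": {"token": "tok-file"}, "staging": {"token": "tok-staging"}}

print(resolve_token(env={}, profiles=profiles))
print(resolve_token(env={"EXAMPLE_TOKEN": "tok-env"}, profiles=profiles))
```

A stdio MCP server that wants the same behavior has to reimplement each of these steps, which is where the duplication comes from.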

Security boundaries. Letting an agent run CLI tools often means letting it act with your credentials, and how well that’s contained depends on how seriously the team approaches sandboxing and network egress. MCP’s spec puts security and consent front and center, but empirical work on open-source MCP servers has found both conventional vulnerabilities and MCP-specific tool poisoning already in the wild. Neither approach is automatically safer - the real question is where your least-privilege boundary sits, and who owns auth when agents start operating cross-team.

For local, developer-centric, data-heavy work, CLI + good docs is often the fastest path. For workflows that need managed access, centralized policy, and a clean agent/user boundary, MCP remains the more defensible option - particularly as clients add better auth flows and org-level controls. Most teams will end up with both: CLI for local composability and rapid debugging, MCP for cross-team integrations where auditability and blast radius matter.

MLOPS COMMUNITY

Advancing Open source World Models - MLOps Reading Group

A single image turns into a navigable world, and an H100 can spit out short “walkthroughs” for about $1 a clip. This reading group unpacks an open source world model that sits between video generation and game engines, plus the training tricks that make it controllable and cheaper to run.

World model, not video - Camera actions are inputs, so scenes stay more consistent when you look away and return.

Data pipeline that teaches motion - Internet video, gameplay telemetry, and Unreal-rendered synthetic data, captioned at multiple granularities.

Training for interactivity and speed - Diffusion backbone, action-conditioning, longer rollouts, then a distilled causal student with self-rollout.

Unlike standard video generation, actions have persistent effects, forcing the model to maintain a coherent simulated state.

MLOps Coding Skills: Bridging the Gap Between Specs and Agents

Your agent scaffolds the wrong build system, picks the wrong base image, and ignores half your engineering standards. The friction shows up fast when AI meets real production constraints. This piece explores how “Agent Skills” encode team conventions directly into the coding loop.

Encoding standards as agent context - Turn automation and observability guidelines into a `SKILL.md` so agents default to `just`, slim `uv` images, GitHub Actions, and MLflow without repeated correction.

Distilling course material into reusable skill files - Extract patterns from existing documentation and format them as structured context agents can ingest, rather than prose they have to interpret.

Managing layered context - Skills now sit alongside MCP tools and AGENTS.md, creating a new operational surface for teams to maintain.

Formalizing your conventions in code-level context changes how reliably agents produce production-ready output.
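For a sense of what "structured context" can mean in practice, here is a small sketch that renders a conventions table into a skill file. It assumes a SKILL.md layout of YAML frontmatter with `name` and `description` followed by markdown; the `render_skill` helper, the skill name, and the exact rule wording are hypothetical, with only the tool choices taken from the piece.

```python
def render_skill(name, description, conventions):
    """Render team conventions as a skill file with a small frontmatter header."""
    lines = [
        "---",
        f"name: {name}",
        f"description: {description}",
        "---",
        "",
        f"# {name}",
        "",
    ]
    for topic, rule in conventions.items():
        lines.append(f"- **{topic}**: {rule}")
    return "\n".join(lines) + "\n"


# Conventions named in the piece; the phrasing of each rule is illustrative.
conventions = {
    "Task runner": "use just for all automation entry points",
    "Base image": "build on slim uv images",
    "CI": "run pipelines with GitHub Actions",
    "Tracking": "log experiments to MLflow",
}

skill_text = render_skill(
    "mlops-conventions",
    "Team defaults for build, CI, and experiment tracking",
    conventions,
)
print(skill_text)
```

Short imperative rules like these are easier for an agent to apply than paragraphs of course prose, which is the distillation step the piece describes.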

ML CONFESSIONS

Perfectly Wrong

Built a multi-agent pipeline last month.

Gave each agent its own memory, tool access, a clear role definition. Spent three days getting the orchestration right.

On day four I realised the summarisation agent had been silently writing to the wrong output directory the entire time, so every downstream agent had been summarising the previous run’s output.

The pipeline had been running perfectly. On the wrong data. I fixed it, re-ran everything, and the results were nearly identical. I have not told anyone on the team.

Share your confession here.