MCP Before MCP

Plus… latency-aware recommendations, LLM-as-judge reliability, replayable agent traces, and shared workspaces for coding agents

Anthropic’s Mythos sends VCs rushing to plug security market holes.

HOT TAKE

U and I need to chat

Flights, refunds, group trips, price comparisons, dates, filters, payment, identity checks: the moment the task gets real, pure chat starts turning into a clumsy UI with extra steps.

What wins in production: open chat or structured UI?

LAST WEEK’S TAKE

Knowledge Cap Gap

You’ve got the knowledge to know it’s not the models capping agents.

HIDDEN GEMS

A spec-first view of coding agents, making the case that implementations could become rebuildable artifacts while specs, tests, traces, and edge cases become the durable system memory.

An early TypeScript SDK for wiring ACP, A2A, AG-UI, A2UI, and MCP into one local-first agent development loop, aimed at making agent protocols easier to use together.

LLM-as-judge reliability is tested across bias, formatting sensitivity, calibration, and model-family effects, showing where automated evals need human checks and stronger task design.

A runtime layer for agent execution traces that turns agent runs into typed, replayable histories for forking, supervision, optimization, and faster agent development.

PRESENTED BY QDRANT

Vector Space Day SF: search, agents, and edge AI

On June 11, Qdrant is bringing Vector Space Day to San Francisco for a full day on production-grade AI infrastructure.

The day covers three areas where vector search is becoming more important:

search and retrieval, including multimodal embeddings, video search, retrieval evals, and production search architecture

agents and memory, including context engineering, scalable memory, agentic evals, and memory architectures

edge and robotics AI, including vector search on robots, cameras, edge hardware, and distributed or air-gapped deployments

Speakers include Qdrant, LlamaIndex, Mem0, Arize AI, TwelveLabs, Neo4j, Qualcomm, AWS, Google DeepMind, and more.

Register with code MLOPS-FREE for a free pass (limited availability)

💡Job of the week

Infrastructure Engineer: GPU HPC H200 B300 B200 // Alpha Compute (Remote)

Alpha Compute is hiring an infrastructure engineer to own the lifecycle of its high-density GPU fleet, including H200, B200, and B300 systems. The role covers bare-metal provisioning, fleet health, liquid-cooled environments, telemetry, auto-remediation, NetBox DCIM, and production AI training infrastructure.

Responsibilities

Own GPU fleet health from provisioning to decommissioning.

Manage high-density liquid-cooled rack environments.

Build telemetry and auto-remediation with DCGM.

Lead NetBox DCIM, IPAM, and cabling integrations.

Requirements

Experience managing HPC or production GPU fleets.

Hands-on knowledge of Hopper and Blackwell systems.

Expertise in InfiniBand, NVLink, and NVSwitch.

Strong Linux internals plus Python or Go automation.

AGENT INFRASTRUCTURE

A shared workspace for coding agents

Agor is an open-source workspace for teams running coding agents across the same codebase. It brings Claude Code, Codex, Gemini, OpenCode, Copilot, prompts, terminals, dev environments, PRs, and Git worktrees into one shared surface, so agent work is easier to track once it starts spreading across branches, people, and review flows.

The important choice is the worktree model. Agor treats each piece of agent work as its own isolated unit, with the branch, environment, session history, and review path kept together. That makes sense for teams doing parallel agent-assisted development, where one person might spin up several attempts at the same task, another person might review the output, and someone else might need to pick up the session later without guessing which terminal, prompt, or branch produced the change.
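To make the worktree idea concrete, here is a minimal sketch of what "one isolated unit per piece of agent work" could look like as a record. This is not Agor's data model: the `AgentWorkUnit` class, its fields, and the branch and directory naming scheme are all assumptions made for illustration. The only real interface it leans on is the standard `git worktree add -b <branch> <path> <base>` command, which it builds but does not run.

```python
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class AgentWorkUnit:
    """Hypothetical record: one agent attempt with its branch, checkout, and history."""

    task: str
    attempt: int
    agent: str  # a label such as "claude-code"; not tied to any real API
    repo_root: Path
    session_log: list[str] = field(default_factory=list)

    @property
    def branch(self) -> str:
        # Assumed naming scheme: one branch per task attempt.
        return f"agent/{self.task}/attempt-{self.attempt}"

    @property
    def worktree_path(self) -> Path:
        # A sibling directory, so parallel attempts never share a checkout.
        name = f"{self.repo_root.name}-{self.task}-{self.attempt}"
        return self.repo_root.parent / name

    def worktree_command(self, base: str = "main") -> list[str]:
        # Builds the git command only; the caller decides whether to run it.
        return [
            "git", "-C", str(self.repo_root),
            "worktree", "add", "-b", self.branch,
            str(self.worktree_path), base,
        ]


unit = AgentWorkUnit(
    task="fix-login", attempt=2, agent="claude-code", repo_root=Path("/repos/shop")
)
unit.session_log.append("prompt: make the login test pass")
print(" ".join(unit.worktree_command()))
```

Keeping the branch, checkout path, and session log on one record is what lets a second person pick up the attempt later without guessing which terminal or prompt produced it.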

That moves the problem away from “can an agent write code?” and toward a more practical infrastructure question: where does agent work live while it is being created, tested, compared, reviewed, and either merged or discarded?

Agor’s answer is to make the workspace itself the coordination layer. Sessions can be organized visually, work can be grouped by repo or task, and the surrounding context does not vanish into a private terminal scrollback. The repo also includes an MCP server, which makes the workspace programmable from agent sessions rather than only controlled by the human using the UI.

That is the more interesting angle for agent infrastructure. A lot of coding-agent tooling still assumes a fairly personal workflow: one developer, one agent, one branch, one editor. Agor is aimed at the messier team version, where agent runs need ownership, visibility, handoff, and review. The agent output is only part of the system. The rest is state management: branches, environments, prompts, files, session history, comments, PRs, and the path from experiment to reviewed code.

It’s early, and some of the edges show. The discussion around viewing agent-generated code before creating a PR is a good example. Teams want lightweight ways to inspect work inside Agor, but the project is also trying not to become a full IDE. That tension is worth noting because it’s probably the line a lot of agent workspaces will need to walk: enough surface area to coordinate and review work, without rebuilding every developer tool around the agent.

The stronger signal is that Agor is treating coding agents as shared team infrastructure, not just personal productivity tools. As agent-assisted coding gets more common, the missing layer may be less about another model interface and more about the operating surface around the work: how it branches, how it is tracked, who can see it, who can take it over, and how it reaches review without losing the context that produced it.

Agor repo: open-source shared workspace for coding agents

MLOPS COMMUNITY

Building MCP Before MCP Existed: Inside Despegar’s Sofia Agent

An AI travel agent sounds simple until it starts touching real bookings, support flows, WhatsApp chats, and airline edge cases.

Despegar split Sofia into a central orchestration layer, Chappie, with specialist agents for flights, hotels, activities, cars, support, and trip planning.

The team built its own MCP-like connection layer before MCP was available, then added MCP later for newer integrations rather than rewriting everything.

They still rely on orchestration because new tools can hijack prompts when routing is too loose.

The interesting bit is how much of production agent design comes down to keeping the model pointed at the right job.
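The routing point can be sketched in a few lines. This is not Despegar's implementation: the specialist names follow the list above, but the tool names, the keyword table, and the `route` and `tools_for` helpers are hypothetical, and a real system would likely use a classifier or a model call where this sketch uses keyword counts. What it shows is the tight version of routing: the orchestrator picks one specialist, and only that specialist's tools are exposed for the turn.

```python
# Hypothetical tool allowlists per specialist agent.
SPECIALIST_TOOLS = {
    "flights": ["search_flights", "change_flight"],
    "hotels": ["search_hotels"],
    "support": ["lookup_booking", "open_refund_case"],
}

# Keyword matching stands in for whatever classifier the orchestrator really uses.
KEYWORDS = {
    "flights": ("flight", "airline", "layover"),
    "hotels": ("hotel", "room", "check-in"),
    "support": ("refund", "cancel", "complaint"),
}


def route(message: str) -> str:
    """Pick exactly one specialist; fall back to support when nothing matches."""
    text = message.lower()
    scores = {
        name: sum(word in text for word in words)
        for name, words in KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "support"


def tools_for(message: str) -> tuple[str, list[str]]:
    """Return the chosen specialist and the only tools it may see this turn."""
    specialist = route(message)
    return specialist, SPECIALIST_TOOLS[specialist]


print(tools_for("Find me a flight to Lima with a short layover"))
print(tools_for("I need a refund for my booking"))
```

Because a newly added tool only ever appears in one specialist's allowlist, it cannot compete for attention in every prompt, which is one way to read "keeping the model pointed at the right job".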

The Latency Goldilocks Zone Explained

Food recommendations get weird when the system has to suggest what you might like next, not just what you already buy.

The team uses user history, price sensitivity, location, restaurant fit, and item profiles to decide when to exploit known preferences and when to test something new.

Conversational ordering changes the search problem, especially when a user asks for constraints that filters handle badly, like delivery time, budget, dietary needs, and taste.

Latency is treated as both an engineering problem and a UX problem, with different tradeoffs for app, WhatsApp, and voice.

The hard part is making the recommendation feel obvious after the system has done a lot of hidden work.
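The exploit-versus-test tradeoff above is often illustrated with an epsilon-greedy rule, sketched here. This is a swapped-in textbook technique, not the team's actual method: the scoring formula, the field names (`affinity`, `price_sensitivity`, `typical_spend`), and the sample data are all assumptions.

```python
import random


def score(item: dict, user: dict) -> float:
    """Hypothetical fit score: taste affinity minus a price penalty."""
    affinity = user["affinity"].get(item["cuisine"], 0.0)
    price_penalty = user["price_sensitivity"] * item["price"] / user["typical_spend"]
    return affinity - price_penalty


def recommend(items: list[dict], user: dict, epsilon: float, rng: random.Random) -> dict:
    """With probability epsilon, try something other than the top-ranked item."""
    ranked = sorted(items, key=lambda item: score(item, user), reverse=True)
    if len(ranked) > 1 and rng.random() < epsilon:
        return rng.choice(ranked[1:])  # explore: test a new option
    return ranked[0]  # exploit: the known preference


items = [
    {"name": "margherita", "cuisine": "pizza", "price": 10},
    {"name": "ramen", "cuisine": "japanese", "price": 14},
    {"name": "poke", "cuisine": "hawaiian", "price": 12},
]
user = {
    "affinity": {"pizza": 0.9, "japanese": 0.2},
    "price_sensitivity": 0.5,
    "typical_spend": 12,
}

print(recommend(items, user, epsilon=0.1, rng=random.Random(0))["name"])
```

A small epsilon keeps most suggestions familiar while still collecting evidence about items the user has never ordered.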

IN PERSON EVENTS

San Francisco - May 15

NYC - May 20

Stockholm - May 26

Paris - May 27

San Francisco - May 27

London - May 28

NYC - May 28

NYC - June 4

London - June 4

VIRTUAL EVENTS

Coding Agents Lunch & Learn - May 15

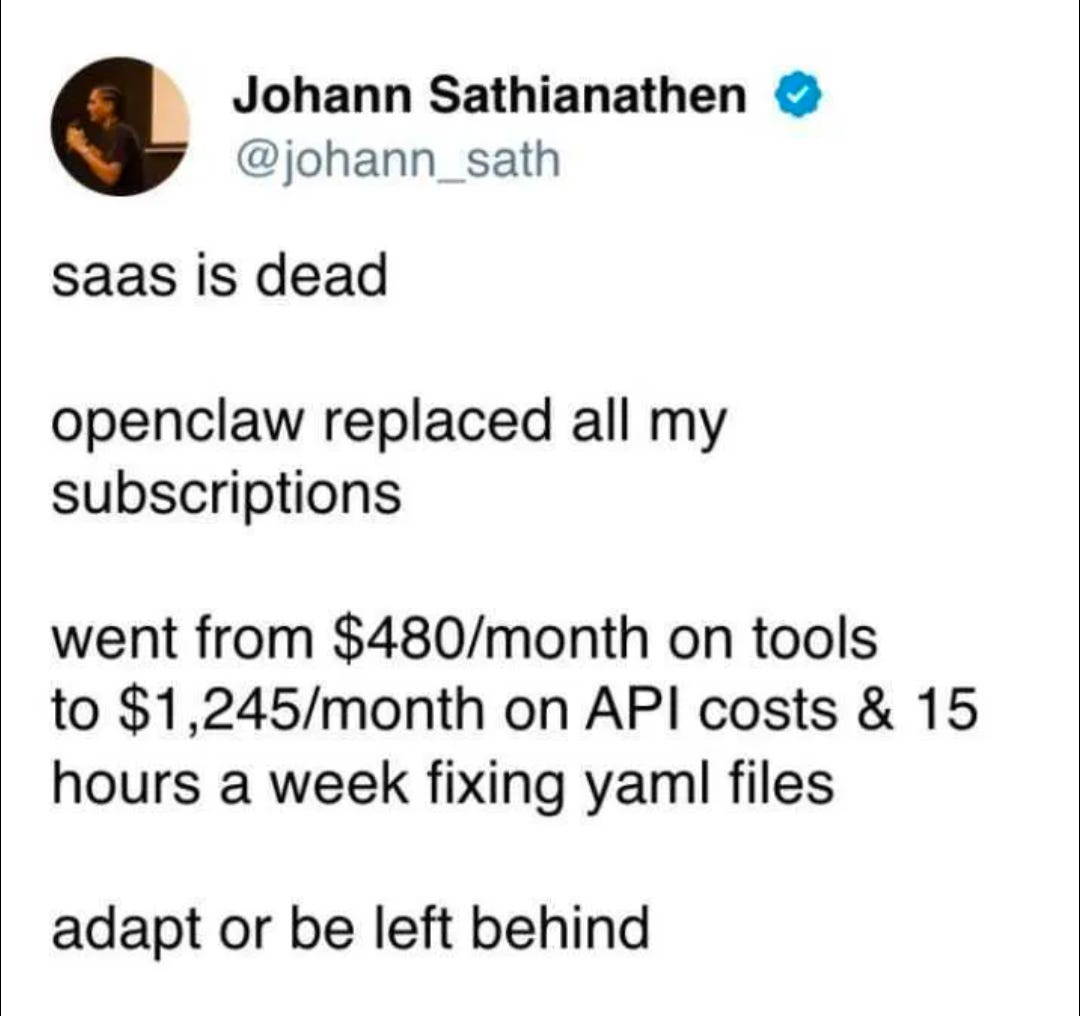

MEME OF THE WEEK

ML CONFESSIONS

Pilot, grounded

I once helped build an eval suite for a customer support agent and got very excited when it jumped from “concerning” to “ready for pilot” in a week.

The prompts were cleaner, the retrieval looked sharper, and the agent had started giving these beautifully specific answers about refunds, account limits, and edge-case policy exceptions. I wrote a short update saying the context layer was finally working.

Then someone from support asked why the agent kept referencing ticket notes that were never shown to the customer.

Turns out our eval cases were cloned from real support tickets, and the agent had permission to search the internal ticket system during evaluation. It wasn’t reasoning through the scenarios. It was finding the original answers.

We renamed the dashboard from “agent accuracy” to “eval harness coverage” and spent the next sprint removing half the context it had been praised for using.
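For anyone who wants to catch this before the dashboard does, a pre-flight check along these lines would have flagged it. It is a minimal sketch under assumptions: the `source_ticket_id` field, the case ids, and the idea that the harness can list which ticket ids the agent is allowed to search are all hypothetical.

```python
def leaked_cases(eval_cases: list[dict], searchable_ticket_ids: list[str]) -> list[str]:
    """Return eval case ids whose source ticket the agent can retrieve during the run."""
    searchable = set(searchable_ticket_ids)
    return [
        case["case_id"]
        for case in eval_cases
        if case.get("source_ticket_id") in searchable
    ]


eval_cases = [
    {"case_id": "e1", "source_ticket_id": "T-1"},
    {"case_id": "e2", "source_ticket_id": "T-2"},
    {"case_id": "e3"},  # hand-written case with no source ticket
]

# Ticket ids the agent may search while being evaluated (assumed to be listable).
print(leaked_cases(eval_cases, ["T-1", "T-9"]))
```

Any case id this returns is measuring retrieval of the original answer, not reasoning through the scenario.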

Share your confession here.

I’m mapping what “AI readiness” means for engineering orgs adopting coding agents. Curious what platform teams are seeing: is the bottleneck repo context, CI/CD guardrails, task quality, security, or governance?